Dell Generative AI Solutions

Dell Generative AI Solutions was introduced in July of this year. For businesses that need a secure, high-performance, proven architecture for deploying large language models (LLMs), Dell offers a modular, full-stack design. The sudden workplace demand for generative AI (GenAI) has caused a seismic shift in IT planning, and the sector is still feeling its effects. As a result, demand has skyrocketed for GPU-accelerated servers, which power the computationally demanding training and inferencing of GenAI workflows.

At the core of Dell Generative AI Solutions is Dell's market-leading portfolio of GPU-accelerated servers, workstations, storage, and networking. In the Dell Validated Design for Generative AI with NVIDIA, this portfolio is combined with NVIDIA AI Enterprise into proven AI infrastructure designs.

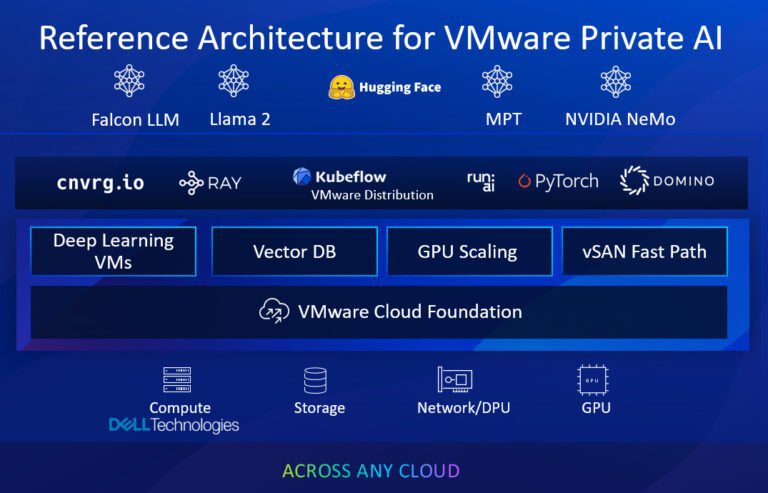

LLM training and inferencing are compute-intensive. They call for best-of-breed data center infrastructure, backed by knowledgeable professional services and rigorous certification with enterprise software solutions. The introduction of VMware Private AI powered by NVIDIA at VMware Explore is further evidence of Dell's best-of-breed strategy for GenAI products. Dell customers now have even more choices for managing IT resources and GenAI infrastructure. With the release of VMware Private AI, VMware and NVIDIA aim to democratize GenAI and spark business innovation for all companies.

The new platform for GenAI combines NVIDIA AI Enterprise with VMware Cloud Foundation™ software. Deployed on Dell PowerEdge servers or VxRail systems, it brings generative AI to businesses that want to run AI solutions in their private cloud or data center. VMware Private AI also supports MLOps platforms such as cnvrg.io, Domino, Kubeflow, and Run:ai.

With the VMware Private AI Foundation powered by NVIDIA, organizations can quickly adopt and deploy GenAI at scale using familiar tools for managing virtual machines, containers, and hybrid cloud infrastructure. Integrating NVIDIA AI Enterprise with the flexibility and scalability of VMware Cloud Foundation, running on Dell PowerEdge servers, helps organizations deliver business value faster.

Why VMware Private AI Foundation with NVIDIA?

With VMware Private AI, organizations can avoid silos in their enterprise IT infrastructure, which reduces the administrative overhead, security concerns, and skills gaps that can arise when managing several software stacks. Running generative AI solutions in a standard vSphere or VMware Cloud Foundation environment combines the promise of generative AI with VMware's established management and administration tools. This solution also enables IT to:

Achieve freedom of choice. A modular architecture that supports a variety of AI software lets organizations select the AI software that best suits their needs.

Deploy with confidence. Validated GenAI designs speed up innovation and business change while minimizing AI risks such as inaccurate models and data and IP leaks.

Deliver exceptional performance. The platform supports a range of GPU technologies and GPU pooling, which can deliver performance on par with bare metal.

Increase productivity. Automate manual processes and repetitive chores to free up staff time for more strategic work.

GenAI for the Enterprise

The VMware Private AI Foundation powered by NVIDIA is intended to maximize the value of data while freeing teams to concentrate on high-value strategic tasks. Customizing and training GenAI LLMs to fit your needs can produce more accurate results and a competitive advantage for your company. Use cases include:

Code generation: Create code that helps developers accelerate their workflow.

Contact center resolution: Improve the effectiveness and accuracy of customer service interactions.

Content creation and management: Create and curate text, web material, graphics, and video.

IT operations automation: Automate repetitive IT processes to cut expenses and boost productivity.

Information retrieval: Locate information quickly and efficiently.

Sales: Use chatbots with advanced content generation capabilities.

Generative AI Solutions from Dell

Dell offers the world's broadest GenAI infrastructure portfolio, spanning client devices, data centers, and cloud services, all in one place.¹

Powerful and dependable, Dell PowerEdge servers support a number of configurations that are well suited to GenAI applications. The portfolio ranges from the PowerEdge R760xa, a general-purpose server with PCIe-based GPUs such as the NVIDIA L40S for GenAI customization and inferencing, to the PowerEdge XE9680, a specialized server with eight NVIDIA H100 Tensor Core SXM GPUs for intensive LLM training workloads.

Solutions from Dell Technologies, VMware, and NVIDIA give enterprises a new path forward by enabling GenAI throughout the company. The three companies continuously cross-validate and certify the integrated technology. To simplify the integration and deployment of VMware products, Dell also maintains a firmware catalog and customized images of VMware ESXi releases.

Dell VxRail hyperconverged infrastructure (HCI) speeds the journey to VMware private and hybrid cloud solutions for GenAI. With VxRail, organizations can:

Handle demanding workloads with thousands of configuration options and mixed-generation node support on the only HCI system jointly developed with VMware.

Provide automated lifecycle management for the full stack, including NVIDIA GPUs and the hypervisor.

VxRail was also one of the first HCI systems certified for NVIDIA AI Enterprise, which makes GenAI workloads easier to deploy and manage.

A Growing Portfolio of AI Solutions

Backed by a growing portfolio of Dell Validated Designs and services for AI, Dell Technologies, VMware, and NVIDIA can help businesses implement AI quickly and efficiently. These solutions include:

AI in virtualized environments: This design deploys AI workloads on virtualized infrastructure, running VMware Cloud Foundation and NVIDIA AI Enterprise on Dell PowerEdge servers and VxRail HCI.

Generative AI with NVIDIA: This solution runs NVIDIA AI Enterprise on Dell PowerEdge servers to deliver high performance and scalability for AI applications.
