LLM applications in Azure AI content safety

Use Prompt Shields to protect your LLMs against prompt injection attacks

Indirect and direct prompt injection attacks also referred to as jailbreaks are becoming major dangers to the security and safety of foundation models. Successful assaults that get beyond an AI system’s security safeguards might have disastrous repercussions, such the disclosure of intellectual property (IP) and personally identifiable information (PII).

Microsoft created Prompt Shields to counter these risks by blocking erroneous inputs before they can reach the foundation model and detecting them in real time. The integrity of user interactions and large language model (LLM) systems is protected by this proactive approach.

Quick Defence Against Jailbreak Attacks:

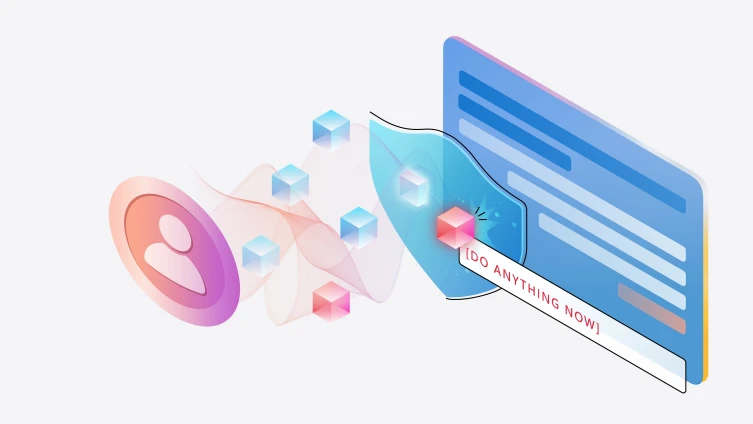

Attacks known as “jailbreak,” “direct prompt attacks,” or “user prompt injection attacks” involve people tampering with prompts to introduce malicious inputs into LLMs and change their behaviours and results. A “DAN” (Do Anything Now) attack is one kind of jailbreak command that may fool the LLM into creating improper material or disobeying limitations set by the system. Released as “jailbreak risk detection” in November of last year, Azure’s Prompt Shield for jailbreak assaults identifies and prevents these attacks by examining prompts for dangerous instructions.

Shadow of the Prompt for Indirect Attacks:

While less well-known than jailbreak assaults, indirect prompt injection attacks provide a distinct hazard and problem. Hackers use these covert assaults to change input data such as webpages, emails, or uploaded documents in an effort to subtly influence AI systems. This makes it possible for hackers to deceive the foundation model into carrying out illicit operations without really altering the prompt or LLM. It may result in account takeover, harassing or libellous material, and other malevolent acts. In order to protect the security and integrity of your generative AI applications, we are adding a Prompt Shield for indirect attacks to counter this. This shield is meant to identify and prevent these covert assaults.

LLM hallucinations may be identified via groundedness detection

In generative AI, the term “hallucinations” describes situations in which a model reliably produces outputs that defy logic or don’t have supporting evidence. This problem might show itself as a variety of things, from little errors to glaringly incorrect results. Enhancing the reliability and quality of generative AI systems requires the ability to recognise hallucinations. Microsoft is releasing Groundedness Detection today, a new tool intended to detect hallucinations based on text. This function improves the quality of LLM outputs by identifying “ungrounded material” in text.

Orient your application with a strong safety system alert

Prompt engineering is one of the most effective and widely used methods for enhancing the dependability of a generative AI system, in addition to the installation of safety mechanisms like Azure AI Content Safety. With Azure AI, customers can now root foundation models on reliable data sources and create system messages that direct behaviour overall and the best use of the grounding data (do this, not that). At Microsoft, we’ve discovered that even little modifications to a system message may have a big influence on the security and quality of an application.

Azure will soon provide safety system message templates right in the Azure AI Studio and Azure OpenAI Service playgrounds by default, to assist users in creating efficient system messages. These templates, which were created by Microsoft Research to reduce the creation and abuse of dangerous material, may assist developers in producing high-caliber apps more quickly.

Examine the hazards and safety aspects of your LLM application

How can you tell if your mitigations and application are performing as planned? In order to securely go from prototype to production, many organisations today lack the resources necessary to stress test their generative AI systems. First off, creating a high-quality test dataset that accurately captures a variety of novel and developing threats, including jailbreak assaults, may be difficult. Evaluations may be a laborious, manual process even with high-quality data, and development teams may find it challenging to understand the findings in a way that will help them implement efficient mitigations.

Before deploying to production, Azure AI Studio offers reliable, automated assessments to assist enterprises in methodically evaluating and refining their generative AI applications.

In the quickly changing field of generative AI, company executives are attempting to find the ideal equilibrium between creativity and risk mitigation. The use of prompt injection attacks, in which malevolent actors attempt to trick an AI system into doing an action that is not part of its original purpose like generating malicious material or obtaining private information has become a prominent problem.

Azure AI content safety

Organisations worry about quality and dependability in addition to reducing these security threats. In order to avoid undermining user confidence, they want to make sure that their AI systems are not producing mistakes or adding data that isn’t supported by the application’s data sources.

Azure is introducing new tools for generative AI app developers that are either now available in Azure AI Studio or will be available shortly to assist them tackle these problems related to AI quality and safety:

- Prompt Shields to identify and prevent prompt injection attacks, which will soon be available in preview in Azure AI Content Safety. These shields include a new model to recognize indirect prompt assaults before they affect your model.

- Soon to be released: groundedness detection to identify “hallucinations” in model outputs.

Soon to come: safety system signals to direct your model’s behaviour towards responsible, safe outputs. - Safety assessments, currently available in preview, determine how vulnerable an application is to content generation hazards and jailbreak assaults.

- Risk and safety monitoring is currently available in preview in the Azure OpenAI Service. It aims to determine which model inputs, outputs, and end users are triggering content filters to inform mitigations, which are scheduled to be implemented shortly.

Azure AI now offers our clients cutting-edge tools to protect their apps across the generative AI lifecycle with these new enhancements.