What is Fivetran?

Fivetran is a modern, cloud-based automated data movement platform, designed to offer organizations the ability to effortlessly extract, load and transform data between a wide range of sources and destinations.

Is Fivetran open source?

No. Fivetran, developed in 2012, is a managed, closed-source ELT solution.

Businesses require quicker data analysis and predictive insights in today’s dynamic environment in order to recognize and handle fraudulent transactions. Generally speaking, combating fraud using data engineering and machine learning involves these essential steps:

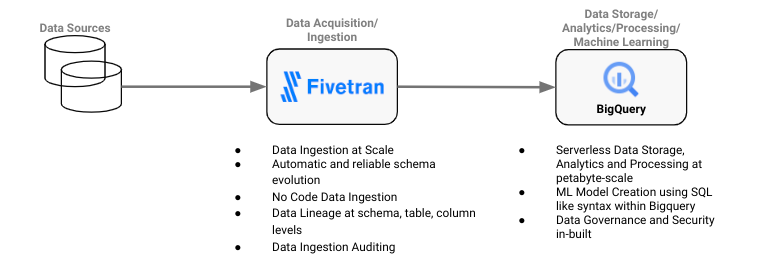

Data acquisition and ingestion: To ingest and store the training data, pipelines across multiple, disparate sources (file systems, databases, third-party APIs) are established. The development of machine learning algorithms for fraud prediction is facilitated by the abundance of valuable information present in this data.

Data analysis and storage: To store and process the ingested data, use an enterprise cloud data platform that is scalable, dependable, and high-performing.

Development of machine-learning models: Create training sets from the stored data and train machine learning models on them to produce predictive models that can distinguish between authentic and fraudulent transactions.
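The three steps above can be sketched end to end in a few lines. This is only a toy illustration under stated assumptions: the two sources, the `transactions` table, and the sample rows are invented for the example, an in-memory SQLite database stands in for the cloud data platform, and a simple amount threshold stands in for a real machine learning model.

```python
import csv
import io
import sqlite3

# Step 1: ingest from two hypothetical sources (a CSV export and an API payload).
csv_export = "txn_id,amount,is_fraud\n1,20.0,0\n2,35.5,0\n3,980.0,1\n"
api_payload = [
    {"txn_id": 4, "amount": 15.0, "is_fraud": 0},
    {"txn_id": 5, "amount": 1200.0, "is_fraud": 1},
]
rows = [
    (int(r["txn_id"]), float(r["amount"]), int(r["is_fraud"]))
    for r in csv.DictReader(io.StringIO(csv_export))
]
rows += [(r["txn_id"], r["amount"], r["is_fraud"]) for r in api_payload]

# Step 2: store everything in one place (SQLite is a stand-in for the warehouse).
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE transactions (txn_id INTEGER PRIMARY KEY, amount REAL, is_fraud INTEGER)"
)
db.executemany("INSERT INTO transactions VALUES (?, ?, ?)", rows)

# Step 3: build a training set and fit a deliberately trivial "model":
# the midpoint between the average legitimate and average fraudulent amount.
avg_by_label = dict(
    db.execute("SELECT is_fraud, AVG(amount) FROM transactions GROUP BY is_fraud")
)
threshold = (avg_by_label[0] + avg_by_label[1]) / 2


def predict_fraud(amount: float) -> bool:
    """Flag a transaction when its amount exceeds the learned threshold."""
    return amount > threshold


print(predict_fraud(900.0), predict_fraud(40.0))
```

A real pipeline would replace each stand-in with a managed component, but the shape (ingest, store, train) stays the same.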

When developing data engineering pipelines for fraud detection, the following issues frequently arise:

Scale and complexity: When an organization uses data from multiple sources, ingesting that data can be a challenging process. Building internal ingestion pipelines can take weeks or months of data engineering resources, taking important time away from primary data analysis tasks.

Maintenance and administrative work: Manual data administration, including cluster sizing, data governance, backup and disaster recovery, and storage management, can seriously impede business agility and delay the delivery of insights.

Steep skill requirements and learning curve: Implementing and using fraud detection solutions can take much longer when a data science team must first be assembled to build the machine learning models and data pipelines.

Three key themes need to be prioritized in order to effectively address these challenges: time to value, design simplicity, and scalability. These can be handled by utilizing BigQuery for sophisticated data analytics and machine learning capabilities, and Fivetran for data movement, ingestion, and acquisition.

Fivetran streamlines data integration

Unless you happen to be living it and dealing with it on a daily basis, it’s easy to underestimate the challenge of reliably persisting incremental source system changes to a cloud data platform. At one company, a DB2 source required the addition of a new column, which set off an arduous process that took six months to complete before the change appeared in the analytics platform.

The company’s capacity to supply downstream data products with the most recent and accurate data was severely impeded by this delay. As such, any modification to the data structure of the source caused lengthy and inconvenient outages for the analytics process. The company’s data scientists were forced to sort through out-of-date and incomplete data.

To create efficient fraud detection models, all of their data had to be:

Curated, contextual: The data must be of the highest caliber, credible, transparent, and reliable, and it must also be unique to their use case.

Timely and accessible: Data must be high-performing, constantly available, and provide seamless access with well-known downstream data consumption tools.

The company selected Fivetran primarily because of its ability to handle schema drift and evolution from multiple sources to their new cloud data platform in an automated and dependable manner. Fivetran’s more than 450 source connectors enable the creation of datasets from a variety of sources, such as files, events, databases, and applications.

The decision changed the game. Fivetran’s reliable supply of high-quality data allowed the company’s data scientists to focus on quickly testing and improving their models, which helped them get closer to prevention by bridging the knowledge gap between insights and action.

The fact that Fivetran automatically and dependably normalized the data and handled any necessary changes from any of their on-premises or cloud-based sources as they moved to the new cloud destination was crucial for this company. Among them were:

- Modifications to the schema (including additions)

- Table modifications (adds, deletes, etc.) within a schema

- Column modifications (adds, deletes, soft deletes, etc.) within a table

- Data type mapping and transformation (with SQL Server serving as an example source)
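To make the kinds of changes listed above concrete, here is a minimal sketch of schema drift detection. It is not Fivetran's implementation: the `SchemaChange` type, the `diff_schema` function, and the column names and types are all hypothetical, chosen only to show how a source schema can be compared with a destination schema to decide which changes to propagate.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SchemaChange:
    action: str  # "add_column", "change_type", or "soft_delete_column"
    column: str
    detail: str = ""


def diff_schema(source: dict[str, str], destination: dict[str, str]) -> list[SchemaChange]:
    """Compare column-to-type maps and list the changes the destination needs."""
    changes = []
    for column, col_type in source.items():
        if column not in destination:
            changes.append(SchemaChange("add_column", column, col_type))
        elif destination[column] != col_type:
            changes.append(
                SchemaChange("change_type", column, f"{destination[column]} -> {col_type}")
            )
    for column in destination:
        if column not in source:
            # Keep history: retire the column instead of dropping its data.
            changes.append(SchemaChange("soft_delete_column", column))
    return changes


# Hypothetical schemas: the source gained a column, changed a type, and lost a column.
source = {"txn_id": "INT64", "amount": "NUMERIC", "merchant_category": "STRING"}
destination = {"txn_id": "INT64", "amount": "FLOAT64", "legacy_flag": "BOOL"}

changes = diff_schema(source, destination)
for change in changes:
    print(change)
```

Treating a removed column as a soft delete rather than a drop is one possible design choice; it keeps historical values available to downstream models.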

The company chose a dataset for a new connector by simply telling Fivetran how they wanted changes to the source system to be handled; no coding, configuration, or customization was needed. Based on particular use case requirements, Fivetran set up and automated this process, allowing the client to decide how often changes would be moved to their cloud data platform.

How does Fivetran replicate databases?

Beyond DB2, Fivetran showed that it could handle a broad range of data sources, including other databases and a number of SaaS applications. For large data sources, particularly relational databases, Fivetran could handle substantial incremental change volumes. Thanks to Fivetran’s automation, the existing data engineering team was able to scale its work without adding more employees. Fivetran’s simplicity and ease of use also allowed business lines to start connector setup themselves, with appropriate governance and security measures in place.
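The idea of moving only incremental changes can be illustrated with a small sketch. It assumes a hypothetical change log in which each event carries a monotonically increasing `seq` number, and a hypothetical `_deleted` flag for soft deletes; none of these names come from Fivetran, and this is not a description of its internals.

```python
def apply_changes(destination: dict, change_log: list, cursor: int) -> int:
    """Apply change events newer than `cursor` and return the new cursor."""
    for event in sorted(change_log, key=lambda e: e["seq"]):
        if event["seq"] <= cursor:
            continue  # already replicated in an earlier sync
        key = event["key"]
        if event["op"] == "delete":
            if key in destination:
                # Soft delete: keep the row, mark it as removed at the source.
                destination[key]["_deleted"] = True
        else:
            # Inserts and updates are both handled as an upsert.
            destination[key] = {**event["row"], "_deleted": False}
        cursor = event["seq"]
    return cursor


# Hypothetical state: event 1 was replicated by a previous sync.
destination = {1: {"amount": 10.0, "_deleted": False}}
change_log = [
    {"seq": 1, "op": "insert", "key": 1, "row": {"amount": 10.0}},
    {"seq": 2, "op": "update", "key": 1, "row": {"amount": 12.5}},
    {"seq": 3, "op": "insert", "key": 2, "row": {"amount": 99.0}},
    {"seq": 4, "op": "delete", "key": 2},
]

cursor = apply_changes(destination, change_log, cursor=1)
print(cursor, destination)
```

Because the cursor is saved after each sync, running the function again with the same log applies nothing new, which is what keeps repeated syncs cheap even when the source table is large.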

Complete data provenance and good governance are essential for financial services companies. The newly released Fivetran Platform Connector addresses both needs by offering quick, easy, and nearly instantaneous access to rich metadata for any Fivetran connector, destination, or even the entire account. The Platform Connector, which incurs no Fivetran consumption costs, provides end-to-end visibility into data pipeline metadata (26 tables are automatically created in your cloud data platform, as documented in the connector's ERD). This metadata covers:

- Source and destination lineage: schema, table, and column

- Volumes and usage

- Types of connectors

- Records

- Roles, teams, and accounts

Financial services companies can better understand their data thanks to this increased visibility, which builds confidence in their data initiatives. It is a useful instrument for supplying data provenance and governance, which are essential components when discussing financial services and the data applications they use.

The scalable and effective data warehouse of BigQuery for fraud detection

BigQuery is an affordable, serverless data warehouse that is efficient and scalable, which makes it a good choice for enterprise fraud detection. Its serverless architecture reduces the amount of infrastructure setup and maintenance required, so data teams can concentrate on data analysis and fraud mitigation techniques.

Some of BigQuery’s main advantages are:

Faster generation of insights: BigQuery enables quick identification of fraudulent patterns and rapid data exploration by running ad hoc queries and experiments without capacity constraints.

Scalability on demand: BigQuery’s serverless architecture automatically scales in response to demand, preventing overprovisioning and ensuring that resources are available when needed. Data teams no longer have to scale their infrastructure manually, which can be laborious and error-prone. Notably, BigQuery can scale while queries are running (in flight), which sets it apart from other contemporary cloud data warehouses.

Data analysis: BigQuery datasets scale to petabytes, so they can store and analyze financial transaction data at nearly unlimited scale. This lets you find hidden trends and patterns in your data for efficient fraud detection.

Machine learning: Using straightforward SQL queries, BigQuery ML provides a variety of model types suited to fraud detection, ranging from anomaly detection to classification. This facilitates quick model development for your unique requirements and democratizes machine learning. The BigQuery ML documentation lists the model types it supports.

Model deployment for large-scale inference: BigQuery itself supports only batch inference, but Google Cloud’s Vertex AI can make predictions in real time on streaming financial data. Deploy your BigQuery ML models to Vertex AI to receive instant insights and actionable alerts that protect your company in real time.
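As a rough illustration of the "straightforward SQL" that BigQuery ML relies on, the sketch below builds a `CREATE MODEL` statement and a batch `ML.PREDICT` query as strings. The dataset, table, model, and label names (`fraud.txn_classifier`, `fraud.transactions_train`, `fraud.transactions_new`, `is_fraud`) are assumptions made up for the example, and the helper functions are hypothetical; only the SQL statement shapes come from BigQuery ML.

```python
def create_model_sql(model: str, training_table: str, label: str,
                     model_type: str = "LOGISTIC_REG") -> str:
    """Build a BigQuery ML statement that trains a classifier on a labeled table."""
    return (
        f"CREATE OR REPLACE MODEL `{model}`\n"
        f"OPTIONS (model_type = '{model_type}', input_label_cols = ['{label}']) AS\n"
        f"SELECT * FROM `{training_table}`"
    )


def predict_sql(model: str, scoring_table: str) -> str:
    """Build a batch-inference query that scores every row of a table."""
    return (
        f"SELECT * FROM ML.PREDICT(MODEL `{model}`, "
        f"(SELECT * FROM `{scoring_table}`))"
    )


# Hypothetical names; substitute your own dataset, tables, and label column.
train_sql = create_model_sql("fraud.txn_classifier", "fraud.transactions_train", "is_fraud")
score_sql = predict_sql("fraud.txn_classifier", "fraud.transactions_new")

print(train_sql)
print(score_sql)

# To run these against BigQuery, one option (assuming the google-cloud-bigquery
# package and configured credentials) is:
#   from google.cloud import bigquery
#   bigquery.Client().query(train_sql).result()
```

Keeping the statements as plain SQL means the same text can be pasted into the BigQuery console or submitted from a scheduled job.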

Fivetran and BigQuery work together to solve a complex problem with a straightforward design: a fraud detection tool that can produce actionable alerts in real time. In the upcoming blog series, we’ll concentrate on developing ML models in BigQuery that can precisely predict fraudulent transactions and implementing the Fivetran-BigQuery integration in a hands-on manner using an actual dataset.