In computing, a cache is a hardware or software component that stores data so that future requests for it can be served more quickly; a CPU cache is the hardware cache built into a processor. The cached data may be the result of an earlier computation or a copy of data stored elsewhere. When the requested data is found in a cache, it is called a cache hit; when it is not, it is called a cache miss. The more requests that can be served from the cache, the faster the system performs, because reading data from the cache is faster than recomputing a result or reading it from a slower data store.
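The hit/miss idea applies to software caches as well as hardware ones. The sketch below uses Python's `functools.lru_cache`, which keeps the results of earlier calls and reports hits and misses through `cache_info()`. It only illustrates the concept; it is not how a hardware CPU cache is built, and `slow_square` is a hypothetical stand-in for an expensive computation or slow data store.

```python
from functools import lru_cache

# Hypothetical "expensive" function; lru_cache stores its results so that
# repeated requests for the same input are served from the cache.
@lru_cache(maxsize=128)
def slow_square(n: int) -> int:
    # Stand-in for a slow computation or a read from a slower store.
    return n * n

for n in [2, 3, 2, 2, 5, 3]:
    slow_square(n)

info = slow_square.cache_info()
hit_rate = info.hits / (info.hits + info.misses)
print(info.hits, info.misses, hit_rate)  # 3 3 0.5
```

The first request for each value is a miss; every repeat is a hit, so half of these six requests never reach the "slow" code.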

Caches must be relatively small to be cost-effective and to enable efficient use of data. Nevertheless, caches have proven effective in many areas of computing, because typical applications access data with a high degree of locality of reference. Such access patterns exhibit spatial locality, where requests are for data stored physically close to data that has already been requested, and temporal locality, where the same data is requested again shortly after it was last requested.
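Both kinds of locality can be seen in a toy cache model. This is a minimal sketch, assuming a hypothetical direct-mapped cache of 64 lines of 64 bytes each (4 KiB in total); real CPU caches are larger and usually set-associative. The names `LINE_SIZE`, `NUM_LINES`, and `hit_rate` are illustrative.

```python
# Toy direct-mapped cache model (hypothetical parameters, not a real CPU).
LINE_SIZE = 64   # bytes per cache line
NUM_LINES = 64   # number of lines -> 4 KiB total capacity

def hit_rate(addresses):
    tags = [None] * NUM_LINES      # which memory block each line holds
    hits = 0
    for addr in addresses:
        block = addr // LINE_SIZE  # memory block containing this byte
        index = block % NUM_LINES  # the only line this block may occupy
        if tags[index] == block:
            hits += 1
        else:
            tags[index] = block    # miss: the whole line is loaded
    return hits / len(addresses)

# Spatial locality: consecutive 4-byte reads share each 64-byte line.
sequential = [i * 4 for i in range(4096)]
# No locality: every read lands in a different line.
strided = [i * LINE_SIZE for i in range(4096)]
# Temporal locality: the same 8 lines are revisited over and over.
reused = [(i % 8) * LINE_SIZE for i in range(4096)]

print(hit_rate(sequential))  # 0.9375
print(hit_rate(strided))     # 0.0
print(hit_rate(reused))      # 0.998046875
```

In the sequential case, one miss brings in a line holding 16 four-byte values, so 15 of every 16 reads hit. The strided pattern never reuses a line, and the reused pattern misses only on the first visit to each of its 8 lines.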

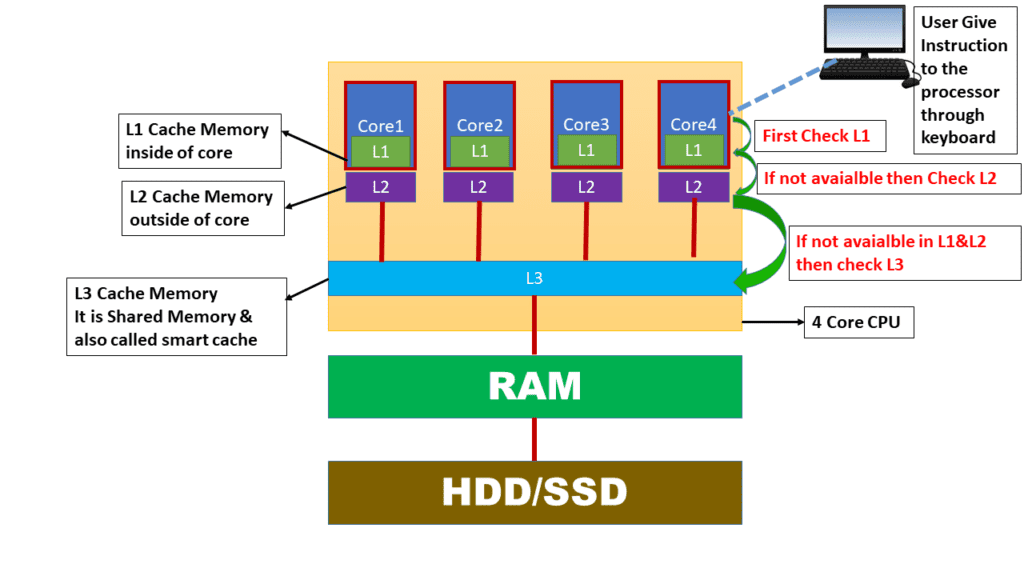

Another problem is the fundamental trade-off between cache latency and hit rate: larger caches have better hit rates but longer latency. To address this trade-off, many computers use multiple levels of cache, with small, fast caches backed up by larger, slower caches. Multi-level caches generally operate by checking the fastest cache, level 1 (L1), first; if it hits, the processor proceeds at high speed. If that smaller cache misses, the next fastest cache, level 2 (L2), is checked, and so on, before external memory is accessed.
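The latency/hit-rate trade-off is commonly quantified as average memory access time (AMAT), using the recursive relation AMAT = hit time + miss rate × miss penalty, where the miss penalty of one level is the AMAT of the level below it. The sketch applies that relation level by level. The latencies and miss rates are hypothetical round numbers, not measurements of any real processor, and the function name `amat` is illustrative.

```python
def amat(levels, memory_latency):
    """Average memory access time (in cycles) for a multi-level cache.

    levels: (hit_latency, local_miss_rate) pairs ordered L1, L2, ...
    memory_latency: cost of an access that misses every cache level.
    """
    # Start at main memory and work back toward L1: the miss penalty of
    # each level is the average access time of everything below it.
    time = memory_latency
    for hit_latency, miss_rate in reversed(levels):
        time = hit_latency + miss_rate * time
    return time

# Hypothetical numbers: L1 hits in 4 cycles and misses 12.5% of the time,
# L2 hits in 12 cycles and misses 25% of the accesses that reach it,
# main memory takes 200 cycles.
l1_only = amat([(4, 0.125)], 200)
with_l2 = amat([(4, 0.125), (12, 0.25)], 200)
print(l1_only, with_l2)  # 29.0 11.75
```

With these assumed figures, backing the small L1 with a larger, slower L2 cuts the average access time from 29 to 11.75 cycles, because most L1 misses are now served in 12 cycles instead of 200.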

What is L1 Cache in a CPU

The level 1 cache (L1 cache), also known as the primary cache, is a memory cache built directly into the microprocessor and used to store data that the CPU has recently retrieved. It is also called the internal cache or the system cache. See the image for a better understanding.

L1 is the most expensive of the CPU caches, and it is also the fastest, because it is built into the processor chip and accessed through a zero wait-state interface. Its size, however, is limited. It is the first cache checked when the processor executes an instruction, and it holds the instructions and data the processor has accessed most recently or will need soon.
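Because L1 is small, it must constantly decide which recently used lines to keep. This is a minimal sketch, assuming a fully associative cache with true least-recently-used (LRU) replacement; real L1 caches are set-associative and often use approximations of LRU. The `LRUCache` class and the `slow_memory` function are hypothetical.

```python
from collections import OrderedDict

class LRUCache:
    """Hypothetical fully associative cache with LRU eviction."""

    def __init__(self, capacity):
        self.capacity = capacity    # number of lines the cache can hold
        self.lines = OrderedDict()  # block -> data, least recent first
        self.hits = 0
        self.misses = 0

    def access(self, block, load):
        if block in self.lines:
            self.hits += 1
            self.lines.move_to_end(block)   # mark as most recently used
            return self.lines[block]
        self.misses += 1
        if len(self.lines) >= self.capacity:
            self.lines.popitem(last=False)  # evict least recently used
        data = load(block)                  # fetch from the slower level
        self.lines[block] = data
        return data

def slow_memory(block):
    # Hypothetical backing store: returns a value derived from the block.
    return block * 10

cache = LRUCache(capacity=2)
for block in [1, 2, 1, 3, 2]:
    cache.access(block, slow_memory)
print(cache.hits, cache.misses, list(cache.lines))  # 1 4 [3, 2]
```

Re-reading block 1 keeps it resident, so block 2 is evicted when block 3 arrives; with only two lines, even that small working set causes repeated misses.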

What is L2 Cache in a CPU

A level 2 cache (L2 cache) is a CPU cache memory that is separate from the microprocessor's core. See the image for a better understanding. In early designs, the L2 cache was mounted on the motherboard, which made it considerably slower.

Most modern CPUs integrate the L2 cache into the processor itself. It is not as fast as the L1 cache, but because it sits further from the core's execution units than L1 does, it can be made larger, and it is still faster than main memory.

What is Smart Cache (L3 Cache) in a CPU

The level 3 (L3) cache is a specialized cache that works together with the L1 and L2 caches to improve performance by preventing bottlenecks caused by long fetch-and-execute cycles. In older systems it was usually placed on the motherboard; most modern designs place it on the CPU die. Data flows from the L3 cache to the L2 cache, which then passes it on to the L1 cache. The L3 cache is usually slower than the L2 cache but still faster than main memory (RAM).

The CPU searches the L1 through L3 caches for the data it needs. If L1 cannot supply the data, L2 is checked next, followed by L3, the largest and slowest of the three. The role of the L3 depends on how the CPU is designed; in some designs, it holds copies of instructions and data frequently used by the cores that share it. Most contemporary CPUs have private L1 and L2 caches for each core and a single L3 cache, located on the CPU die, that is shared by all cores.
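The search order and the flow of data from L3 to L2 to L1 can be sketched with a tiny three-level model. This is a simplification under stated assumptions: a single core, reads only, fully associative levels with LRU eviction, and a fill policy that copies a block into every faster level on the way back (loosely resembling an inclusive design, without enforcing inclusion). Real CPUs use inclusive, exclusive, or non-inclusive L3 policies depending on the design. The `Level` class, `read` function, and capacities are hypothetical.

```python
from collections import OrderedDict

class Level:
    """Hypothetical cache level: fixed number of lines, LRU eviction."""

    def __init__(self, name, capacity):
        self.name = name
        self.capacity = capacity
        self.lines = OrderedDict()  # block -> True, least recent first

    def lookup(self, block):
        if block in self.lines:
            self.lines.move_to_end(block)  # refresh LRU position
            return True
        return False

    def fill(self, block):
        if len(self.lines) >= self.capacity:
            self.lines.popitem(last=False)  # evict least recently used
        self.lines[block] = True

def read(hierarchy, block):
    """Search L1, then L2, then L3; return where the block was found."""
    for i, level in enumerate(hierarchy):
        if level.lookup(block):
            # Copy the block into every faster level that missed.
            for faster in hierarchy[:i]:
                faster.fill(block)
            return level.name
    # Missed in every cache: fetch from main memory and fill all levels.
    for level in hierarchy:
        level.fill(block)
    return "memory"

# Hypothetical capacities (in lines): small L1, larger L2, largest L3.
hierarchy = [Level("L1", 2), Level("L2", 4), Level("L3", 8)]
trace = [1, 2, 3, 1, 1, 4, 5, 2]
result = [read(hierarchy, b) for b in trace]
print(result)
# ['memory', 'memory', 'memory', 'L2', 'L1', 'memory', 'memory', 'L3']
```

Block 1 is evicted from the tiny L1 but is still found in L2, after which a repeat read hits in L1. Block 2 eventually falls out of both L1 and L2 but is still held by the larger L3, so that read avoids main memory.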