AMD ROCm 6.1

With the open-source ROCm 6 software platform, AMD aims to maintain its commitment to open-source, device-independent solutions while creating an environment that maximises the performance and potential of AMD Instinct accelerators. Think of ROCm 6 as the bridge between your most ambitious AI ideas and their successful implementation. It lets developers work at their own pace, testing and deploying applications across a wide range of GPU architectures, and it offers strong interoperability with key industry frameworks.

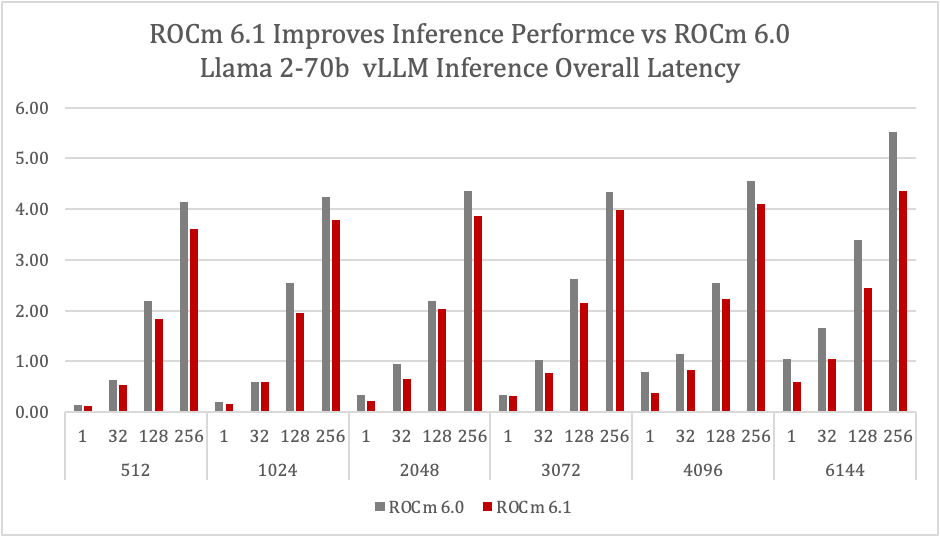

ROCm 6.1, AMD’s most recent platform update, adds a host of new features for researchers and developers alike. To keep pace with rapid developments in AI frameworks, ROCm 6.1 builds on the fundamental advantages of ROCm 6 by supporting the most recent AMD Instinct and Radeon GPUs, extending optimisations across a wide range of computational domains, and broadening ecosystem support. The new features and updates aim to improve application performance and reliability so that AI and HPC developers can push the boundaries of what is feasible.

Presenting rocDecode, a video processing tool

rocDecode, a new ROCm library, brings high-performance video decoding directly to AMD GPUs by using the Video Core Next (VCN) specialised media engines. These hardware-based decoders handle video streams efficiently.

By decoding compressed video directly into video memory, rocDecode reduces the amount of data transferred over the PCIe bus and removes typical bottlenecks in video processing. The decoded frames can then be post-processed quickly with the ROCm HIP framework, which supports real-time operations such as video scaling, colour conversion, and augmentation that are critical for advanced analytics, inferencing, and machine learning training.

rocDecode is designed to maximise the efficiency and scalability of video decoding. The API lets applications create multiple decoder instances that run concurrently, so all of the VCNs on a GPU device can be fully utilised. This parallelism means that even high-volume video streams can be decoded and processed simultaneously. In short, rocDecode strengthens the video processing pipeline, providing the performance and power-efficiency gains that contemporary AI and HPC applications need.

MIGraphX adds Flash Attention and PyTorch backend

MIGraphX is AMD’s graph inference engine, designed to accelerate deep learning neural networks. It is available through C++ and Python APIs and through a command-line tool called migraphx-driver. This flexibility lets developers integrate advanced model inference into their applications with ease.

ROCm 6.1 adds support for Flash Attention, which improves the memory efficiency of well-known models such as BERT, GPT, and Stable Diffusion. This boosts performance for transformer-based models and contributes to faster, more power-efficient processing of complex neural networks.
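The article does not describe how MIGraphX implements Flash Attention, so the following is only a minimal pure-Python sketch of the general idea behind the technique: keys and values are processed block by block while a running softmax is maintained, so the full list of attention scores never has to be held in memory at once. The function names, the single-query setup, and the block size are illustrative assumptions, not MIGraphX APIs.

```python
import math

def naive_attention(q, keys, values):
    """Reference version: materialise every score, then softmax and weight the values."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(dim)]

def tiled_attention(q, keys, values, block=2):
    """Blockwise version: only running statistics are kept between blocks."""
    scale = 1.0 / math.sqrt(len(q))
    dim = len(values[0])
    running_max = float("-inf")   # largest score seen so far
    running_sum = 0.0             # softmax denominator, relative to running_max
    acc = [0.0] * dim             # weighted sum of values, relative to running_max
    for start in range(0, len(keys), block):
        k_blk = keys[start:start + block]
        v_blk = values[start:start + block]
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial results to the new maximum (0.0 on the first block).
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            for j in range(dim):
                acc[j] += w * v[j]
        running_max = new_max
    return [a / running_sum for a in acc]

# Hypothetical toy data: one query attending over five key/value pairs.
q = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -1.0], [2.0, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
print(naive_attention(q, keys, values))
print(tiled_attention(q, keys, values, block=2))
```

Both functions should agree up to floating-point error. The tiled version only ever holds one block of scores, which is where the memory saving comes from.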

ROCm 6.1 also includes a new Torch-MIGraphX library, which integrates MIGraphX capabilities directly into PyTorch workflows. It defines a ready-to-use “migraphx” backend for the torch.compile API. The Torch-MIGraphX library supports a range of data types, such as FP32, FP16, and INT8, to meet different computing requirements.
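As a rough illustration of what selecting that backend might look like, the sketch below passes the “migraphx” backend name from the text to torch.compile. It assumes that the Python module is importable as `torch_migraphx` and that importing it registers the backend; both are assumptions to check against the installed version. The helper `compile_for_migraphx` is hypothetical and simply returns the model unchanged when either package is missing, so the snippet runs on machines without ROCm.

```python
import importlib.util

def compile_for_migraphx(model):
    """Return model compiled with the "migraphx" backend, or model itself as a fallback."""
    have_torch = importlib.util.find_spec("torch") is not None
    have_backend = importlib.util.find_spec("torch_migraphx") is not None
    if not (have_torch and have_backend):
        # Assumption: without both packages there is nothing to compile against.
        return model
    import torch
    import torch_migraphx  # noqa: F401  (assumed to register the "migraphx" backend)
    return torch.compile(model, backend="migraphx")

# Usage with a hypothetical callable standing in for a torch.nn.Module.
def double(x):
    return x * 2

compiled = compile_for_migraphx(double)
print(callable(compiled))
```

In a real workflow the argument would be a PyTorch module, and compilation would typically happen lazily on the first call.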

Better MIOpen Library performance

MIOpen is AMD’s open-source library of deep learning primitives, built specifically to improve GPU performance. It offers a full suite of tools for optimising memory bandwidth and GPU launch overheads, using techniques such as fusion and an auto-tuning infrastructure. This infrastructure adapts algorithms to optimise convolutions for different filter and input sizes, and it handles a wide range of problem configurations efficiently.

The most recent MIOpen updates focus on performance, especially for convolutions and inference. ROCm 6.1 introduces Find 2.0 fusion plans, which are intended to make better use of system resources and let the library run inference workloads more efficiently. AMD has also improved the convolution kernels for the NHWC format (Number of samples, Height, Width, Channels). New heuristics tune performance specifically for this format, allowing better handling and processing of convolution operations across multiple applications. NHWC orders the dimensions as batch, height, width, and then channels, so the channel values of each pixel sit next to each other in memory.
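To make the NHWC layout concrete, here is a small pure-Python sketch that computes flat memory offsets for the NCHW and NHWC layouts and converts a tensor from one to the other. The helper names are illustrative and are not part of MIOpen.

```python
def nchw_offset(n, c, h, w, C, H, W):
    """Flat offset when the width index varies fastest (NCHW)."""
    return ((n * C + c) * H + h) * W + w

def nhwc_offset(n, c, h, w, C, H, W):
    """Flat offset when the channel index varies fastest (NHWC)."""
    return ((n * H + h) * W + w) * C + c

def nchw_to_nhwc(flat, N, C, H, W):
    """Reorder a flat NCHW buffer into NHWC order."""
    out = [None] * len(flat)
    for n in range(N):
        for c in range(C):
            for h in range(H):
                for w in range(W):
                    src = nchw_offset(n, c, h, w, C, H, W)
                    dst = nhwc_offset(n, c, h, w, C, H, W)
                    out[dst] = flat[src]
    return out

# One image, 3 channels, 2x2 pixels. In NCHW, channel 0 holds 0..3,
# channel 1 holds 4..7 and channel 2 holds 8..11.
nchw = list(range(12))
print(nchw_to_nhwc(nchw, N=1, C=3, H=2, W=2))
# → [0, 4, 8, 1, 5, 9, 2, 6, 10, 3, 7, 11]
```

After conversion, the three channel values of each pixel are adjacent, which is the property that NHWC-specific convolution kernels can exploit.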

New Composable Kernel Library Architecture Support

ROCm 6.1 expands architecture support in the Composable Kernel (CK) library, bringing highly efficient kernels to a wider range of AMD GPUs. A major update in this release is the addition of stochastic rounding to the FP8 rounding logic. Instead of always rounding to the nearest representable value, stochastic rounding rounds up or down with a probability that depends on how close the value is to each neighbour, so rounding errors tend to average out rather than accumulate. By simulating more realistic data behaviour, this technique improves model convergence and provides a more accurate and dependable means of handling data in machine learning models.
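The following pure-Python sketch shows how stochastic rounding behaves. It is a simplification under stated assumptions: values are rounded to a uniform grid with spacing `step`, whereas real FP8 formats have non-uniform spacing, and the function names are illustrative rather than part of the CK library.

```python
import math
import random

def round_to_nearest(x, step):
    """Deterministic rounding to the nearest multiple of step."""
    return round(x / step) * step

def stochastic_round(x, step, rng):
    """Round x to a neighbouring multiple of step.

    The probability of rounding up equals the fractional distance from the
    lower neighbour, so the expected result equals x.
    """
    scaled = x / step
    lower = math.floor(scaled)
    frac = scaled - lower
    if rng.random() < frac:
        lower += 1
    return lower * step

rng = random.Random(0)           # fixed seed so the run is repeatable
x, step, trials = 0.3, 1.0, 20000
samples = [stochastic_round(x, step, rng) for _ in range(trials)]
print("nearest:", round_to_nearest(x, step))          # always 0.0
print("stochastic mean:", sum(samples) / trials)      # close to 0.3
```

Round-to-nearest loses the value 0.3 entirely on this grid, while the stochastic version preserves it on average. That matters when many small updates are accumulated in a low-precision format.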

Expanded Sparse Computations with hipSPARSELt

To speed up deep learning tasks, ROCm 6.1 extends hipSPARSELt’s support for structured sparsity matrices. Notably, this release supports Sparse Matrix-Matrix Multiplication (SPMM) configurations in which ‘B’ is the sparse matrix and ‘A’ is the dense matrix. Previously, the library only supported multiplications in which ‘A’ was the sparse matrix and ‘B’ the dense matrix. Supporting both configurations makes SPMM operations more flexible and faster, which further optimises deep learning computations.
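The sketch below illustrates the dense-A, sparse-B configuration in pure Python. It assumes a 2:4 structured sparsity pattern (at most two non-zero values in every group of four along the reduction dimension), which the article does not specify. All function names are illustrative and are not hipSPARSELt APIs.

```python
def prune_2_of_4(column):
    """Keep the 2 largest-magnitude entries in each group of 4 and zero the rest."""
    pruned = list(column)
    for start in range(0, len(column), 4):
        group = range(start, min(start + 4, len(column)))
        keep = sorted(group, key=lambda i: abs(column[i]), reverse=True)[:2]
        for i in group:
            if i not in keep:
                pruned[i] = 0.0
    return pruned

def compress(column):
    """Store only (row_index, value) pairs for the non-zero entries."""
    return [(i, v) for i, v in enumerate(column) if v != 0.0]

def spmm_dense_a_sparse_b(a_rows, b_columns_compressed):
    """C = A @ B with A dense (list of rows) and B given as compressed columns."""
    return [
        [sum(row[i] * v for i, v in col) for col in b_columns_compressed]
        for row in a_rows
    ]

# Hypothetical data: A is 2x4 and dense, B is 4x2 and listed column by column.
A = [[1.0, 2.0, 3.0, 4.0],
     [0.0, 1.0, 0.0, 1.0]]
B_columns = [[0.1, -5.0, 2.0, 0.3],
             [4.0, 0.2, -0.1, 3.0]]

B_sparse = [compress(prune_2_of_4(col)) for col in B_columns]
print(spmm_dense_a_sparse_b(A, B_sparse))
# → [[-4.0, 16.0], [-5.0, 3.0]]
```

Only half of B’s entries are stored and multiplied, which is the source of the potential speed-up. Hardware implementations use a packed metadata format rather than Python tuples.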

Higher-Level Tensor Functions using hipTensor

The AMD-specific C++ library hipTensor uses the Composable Kernel Library’s primitives to speed up tensor operations. hipTensor was created by AMD to take advantage of general-purpose kernel languages like HIP C++. In cases where complicated tensor computations are needed, hipTensor optimises the way tensor primitives are executed.

The most recent version of hipTensor adds support for 4D tensor permutation and contraction. Permutation is a critical operation in many tensor-based computations, and with ROCm 6.1 users can now carry it out efficiently on 4D tensors. The library also now supports 4D contractions for the F16, BF16, and Complex F32/F64 data formats. With this additional functionality, hipTensor can optimise a wider range of operations, enabling more complex and varied manipulations of tensor data, many of which are necessary for demanding workloads such as training neural networks and running complex simulations.
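For readers unfamiliar with these operations, the pure-Python sketch below shows what a 4D permutation and a 4D contraction compute, using nested lists. It says nothing about how hipTensor implements them or about its data types; the helper names and the choice of contracted indices are illustrative assumptions.

```python
from itertools import product

def shape_of(t):
    """Shape of a regular nested list."""
    shape = []
    while isinstance(t, list):
        shape.append(len(t))
        t = t[0]
    return tuple(shape)

def build(shape, fn, prefix=()):
    """Create a nested list of the given shape with entries fn(index_tuple)."""
    if not shape:
        return fn(prefix)
    return [build(shape[1:], fn, prefix + (i,)) for i in range(shape[0])]

def permute(t, order):
    """Reorder axes: output axis k is input axis order[k]."""
    src_shape = shape_of(t)
    out_shape = tuple(src_shape[axis] for axis in order)

    def fetch(out_idx):
        src_idx = [0] * len(order)
        for k, axis in enumerate(order):
            src_idx[axis] = out_idx[k]
        value = t
        for i in src_idx:
            value = value[i]
        return value

    return build(out_shape, fetch)

def contract(a, b):
    """C[m0][m1][n0][n1] = sum over k0, k1 of A[m0][m1][k0][k1] * B[n0][n1][k0][k1]."""
    m0, m1, k0, k1 = shape_of(a)
    n0, n1, _, _ = shape_of(b)

    def entry(idx):
        i, j, p, q = idx
        return sum(a[i][j][x][y] * b[p][q][x][y]
                   for x, y in product(range(k0), range(k1)))

    return build((m0, m1, n0, n1), entry)

# Each entry encodes its own index, e.g. t[1][0][1][2] == 1012.
t = build((2, 1, 2, 3), lambda i: 1000 * i[0] + 100 * i[1] + 10 * i[2] + i[3])
p = permute(t, (3, 2, 1, 0))
print(shape_of(p))        # (3, 2, 1, 2)
print(p[2][1][0][1])      # 1012, taken from t[1][0][1][2]

a = build((1, 1, 2, 2), lambda i: 1.0 + 2 * i[2] + i[3])   # [[1, 2], [3, 4]]
print(contract(a, a)[0][0][0][0])                           # 1 + 4 + 9 + 16 = 30.0
```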

AMD wants to provide you with the newest in high-performance computing through the ROCm platform. Every upgrade in ROCm 6.1 has been created to increase productivity, optimise processes, and assist you in reaching your objectives more quickly by offering useful, strong tools that unleash your creative potential.

Benchmark Graph Systems:

| ROCm 6.1 | ROCm 6.0 |
| --- | --- |
| MI300X Supermicro BKC 24.07.06 MI300X-NPS1-SPX-192GB-750W ROCm 6.1.0 Container | MI300X Banff, BKC X24.05.00 MI300X-None-None-192GB-750W ROCm 6.0 Container |