Nvidia’s H200 GPU will power next-generation AI exascale supercomputers with 141GB of HBM3e and 4.8 TB/s bandwidth

Nvidia unveiled the H200 and GH200 product lines this morning at Supercomputing 23. They build on the Hopper H100 architecture with extra memory and processing capability, making them the most powerful chips Nvidia has ever produced. They will power the next generation of AI supercomputers, which are expected to bring over 200 exaflops of AI compute online during 2024. Let’s examine the specifics.

The H200 GPU is arguably the real star of the show. Nvidia did not give a full breakdown of the specifications, but the main highlight appears to be a significant increase in memory capacity and bandwidth per GPU.

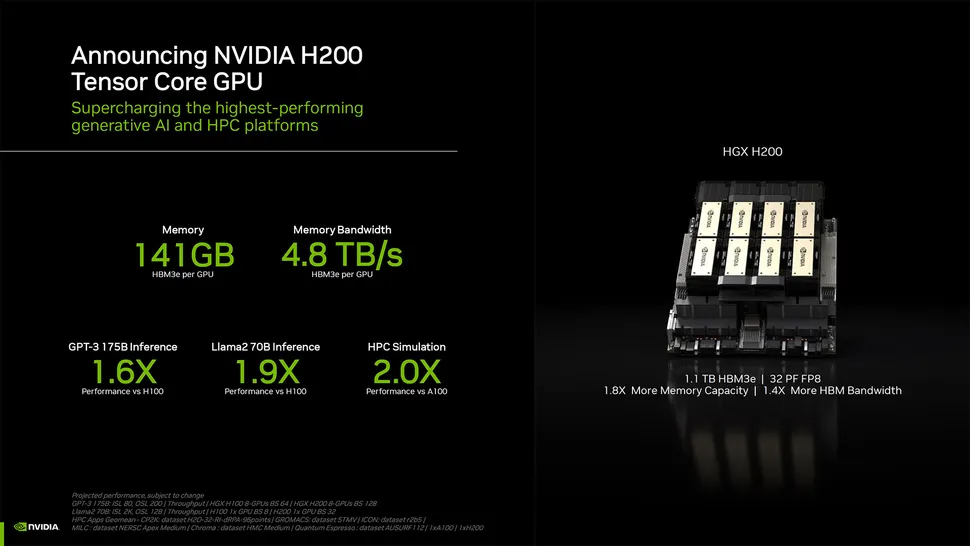

The updated H200 carries 141GB of HBM3e memory across six stacks, running at an effective 6.25 Gbps per pin for 4.8 TB/s of total bandwidth per GPU. That is a huge boost over the original H100, which had 80GB of HBM3 and 3.35 TB/s of bandwidth. Some H100 configurations did offer more memory, such as the H100 NVL, which paired two boards for a combined 188GB (94GB per GPU). Compared to the H100 SXM model, however, the new H200 SXM has 76% more memory capacity and 43% more bandwidth.
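The figures above can be checked with a little arithmetic. The sketch below assumes a 1024-bit interface per HBM stack, which is the usual HBM stack width and not something Nvidia stated in this announcement; the variable names are purely illustrative.

```python
# Sanity check of the H200 memory figures quoted in the article.
# ASSUMPTION (not from Nvidia's announcement): 1024-bit interface per HBM3e stack.
STACKS = 6
BITS_PER_STACK = 1024      # assumed stack width
PIN_RATE_GBPS = 6.25       # effective data rate quoted in the article

# Total bits per second across all stacks, converted from bits to bytes.
bandwidth_gb_s = STACKS * BITS_PER_STACK * PIN_RATE_GBPS / 8
bandwidth_tb_s = bandwidth_gb_s / 1000

# Figures quoted in the article for the SXM parts.
h100_bw_tb_s, h100_mem_gb = 3.35, 80
h200_bw_tb_s, h200_mem_gb = 4.8, 141

bw_gain_pct = (h200_bw_tb_s / h100_bw_tb_s - 1) * 100
mem_gain_pct = (h200_mem_gb / h100_mem_gb - 1) * 100

print(f"Peak bandwidth: {bandwidth_tb_s:.1f} TB/s")
print(f"Bandwidth gain over H100 SXM: {bw_gain_pct:.0f}%")
print(f"Capacity gain over H100 SXM: {mem_gain_pct:.0f}%")
```

Under that assumption, six stacks at 6.25 Gbps work out to the quoted 4.8 TB/s, and the percentage gains match the 43% and 76% figures.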

Note that raw compute performance does not appear to have changed much. The only compute figure Nvidia showed was “32 PFLOPS FP8” for an eight-GPU HGX H200 system. Since the original H100 delivered 3,958 teraflops of FP8, eight of those GPUs already provided roughly 32 petaflops of FP8.
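As a quick check of that comparison, the snippet below multiplies the per-GPU H100 figure by eight. It uses only the numbers quoted in the article; the constant names are illustrative.

```python
# FP8 throughput of eight original H100 GPUs, from the per-GPU figure in the article.
H100_FP8_TFLOPS = 3958
GPUS_PER_HGX = 8

hgx_h100_pflops = H100_FP8_TFLOPS * GPUS_PER_HGX / 1000  # TFLOPS -> PFLOPS

print(f"Eight H100s: {hgx_h100_pflops:.1f} PFLOPS FP8")
```

The result rounds to the same 32 PFLOPS that Nvidia quotes for HGX H200, which is why the compute side looks essentially unchanged.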

How much faster will the H200 be than the H100? That depends on the workload. For LLMs like GPT-3, which benefit substantially from additional memory capacity, Nvidia claims up to 18X the performance of the original A100, whereas the H100 is only about 11X faster. The upcoming Blackwell B100 is also teased, though for now it is just a taller bar that fades to black.
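Those two multipliers imply a rough H200-over-H100 ratio for this workload. The sketch below simply divides one by the other; because both are “up to” or approximate figures, the result is a ballpark estimate, not a measured number.

```python
# Implied H200 vs. H100 speedup on the GPT-3 style workload cited above.
# Both inputs are Nvidia's claimed multipliers relative to the original A100.
H200_VS_A100 = 18   # "up to" figure
H100_VS_A100 = 11   # approximate figure

h200_vs_h100 = H200_VS_A100 / H100_VS_A100

print(f"Implied H200 speedup over H100: about {h200_vs_h100:.2f}x")
```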

Of course, the announcement goes beyond the new H200 GPU. A new GH200 that combines the H200 GPU with the Grace CPU is also on the way. Each GH200 “superchip” will contain a combined 624GB of memory. The original GH100 paired 96GB of HBM3 memory with 480GB of LPDDR5x memory for the CPU, while the new GH200 uses 144GB of HBM3e memory (slightly more than the 141GB quoted above for the standalone H200).

Once again, there is little information about whether anything has changed on the CPU side. Nvidia did offer some comparisons between the GH200 and a “modern dual-socket x86” setup, but note that the speedup was quoted relative to “non-accelerated systems.”

What does that mean? We can only assume the x86 servers were running code that was not fully optimized, especially given how quickly the AI field moves and how often new optimizations appear.

The GH200 will also be used in new HGX H200 systems. These are claimed to be “seamlessly compatible” with the current HGX H100 systems, meaning HGX H200 can be used in the same installations for increased performance and memory capacity without having to rework the infrastructure. That brings us to the last topic of discussion: the new supercomputers that will be powered by GH200.

The Swiss National Supercomputing Center’s Alps supercomputer will probably be one of the first Grace Hopper supercomputers to go online next year, though it still uses GH100. The Venado supercomputer at Los Alamos National Laboratory will be the first GH200 system operational in the United States. The Texas Advanced Computing Center (TACC) Vista system, also announced today, will likewise use Grace CPUs and Grace Hopper superchips; however, it is unclear whether they are based on H100 or H200.

As far as we know, the largest upcoming installation is the Jülich Supercomputing Center’s Jupiter supercomputer. It will contain “nearly” 24,000 GH200 superchips for a combined 93 exaflops of AI compute (apparently using the FP8 figures, though in our experience most AI still uses BF16 or FP16). It will also provide 1 exaflop of conventional FP64 compute. It is based on “quad GH200” boards, each carrying four GH200 superchips.
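The FP8 inference can be sanity-checked from the article's own numbers. The sketch below divides Jupiter's quoted AI throughput by the superchip count and compares it with the 3,958-teraflop FP8 figure quoted earlier for the H100; treating 24,000 as an upper bound on the chip count is an assumption drawn from Nvidia's “nearly”.

```python
# Does 93 exaflops across ~24,000 superchips match the per-GPU FP8 figure?
JUPITER_AI_EXAFLOPS = 93
SUPERCHIPS_UPPER_BOUND = 24_000   # "nearly" 24,000, so treated as an upper bound
FP8_PFLOPS_PER_GPU = 3.958        # H100 FP8 figure quoted earlier in the article

# Per-chip throughput if exactly 24,000 superchips were installed.
per_chip_pflops = JUPITER_AI_EXAFLOPS * 1000 / SUPERCHIPS_UPPER_BOUND

# Chip count implied if each superchip delivers the full FP8 figure.
implied_chips = JUPITER_AI_EXAFLOPS * 1000 / FP8_PFLOPS_PER_GPU

print(f"Per-chip throughput at 24,000 chips: {per_chip_pflops:.3f} PFLOPS")
print(f"Chips implied at full FP8 rate: {implied_chips:,.0f}")
```

The per-chip result lands just under the FP8 figure, and the implied chip count falls a little short of 24,000, which is consistent with the 93-exaflop total being an FP8 number.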

With these new supercomputer deployments, Nvidia expects over 200 exaflops of AI compute to come online within the next year or two. The full Nvidia presentation can be viewed below.
